NFT marker with product packaging

In this experiment we attempt to create a Natural Feature Tracking (NFT) marker from product packaging, in order to show that product packaging can be acquired for use in an Augmented Reality scene on a mobile device.

Our ultimate aim is to superimpose an AR object on to product packaging in a 'natural' environment: in this case, baby products on a shelf in a supermarket (Waitrose).

Setting up

Learning from our first experiments with NFT markers, we hypothesised that clear, identifiable borders on a candidate image affect the confidence level achieved when creating a usable NFT marker.

For our experiment to be successful, and to show that this idea is viable in everyday operation, we cannot rely on product packaging being isolated within any borders; however, we do need a way of ruling out the lack of borders as the reason for a failed NFT marker acquisition.

With this in mind, our experiment needs to have control groups to test this hypothesis:

- Product packaging with a black border

- Product packaging with a white border

- Product packaging with a neutral (grey) border
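The three bordered variants above can be sketched in a few lines. This is only an illustration under stated assumptions: the image is represented as a small grid of grey levels rather than a real photograph, and `add_border`, `BORDERS` and the sample `packaging` grid are hypothetical names, not the tooling we actually used to crop our images.

```python
def add_border(pixels, width, value):
    """Return a copy of a grayscale pixel grid surrounded by a uniform border."""
    if width < 0:
        raise ValueError("width must be non-negative")
    row_len = (len(pixels[0]) if pixels else 0) + 2 * width
    # Full-width rows of border colour for the top and bottom edges.
    edge = [[value] * row_len for _ in range(width)]
    # Original rows padded on the left and right with the border colour.
    middle = [[value] * width + list(row) + [value] * width for row in pixels]
    return edge + middle + [list(row) for row in edge]


# Grey levels for the three control groups (0 = black, 255 = white).
BORDERS = {"black": 0, "white": 255, "grey": 128}

# Stand-in 2x2 "image"; hypothetical values, not captured data.
packaging = [[200, 90], [60, 180]]

variants = {name: add_border(packaging, 1, level) for name, level in BORDERS.items()}

for name, grid in variants.items():
    print(name)
    for row in grid:
        print(row)
```

Each variant would then be processed separately, so that any difference in confidence level can be attributed to the border alone.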

If we don't get hits on product packaging with borders, our experiment fails immediately!

We put together this short video to explain our reasoning:

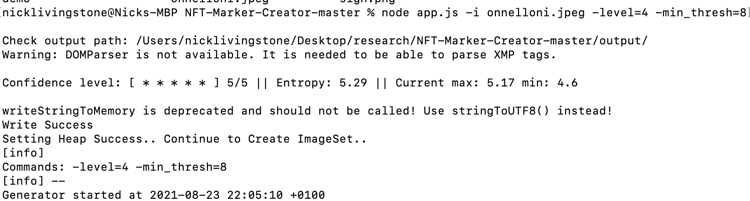

Having captured usable images, in that their lighting and resolution reflect everyday usage, we put them through the command line NFT creator.

First attempt - vegetable cannelloni packaging

We cropped the best captured image to a black border:

In this first attempt, we have a confidence level of 5/5!

- Product packaging AR demo 1: box - test by pointing your mobile device at the captured image above.

The conclusion is that the better the mobile device, the better the chances of success. (Also, our hypothesis that borders matter was proven wrong.)

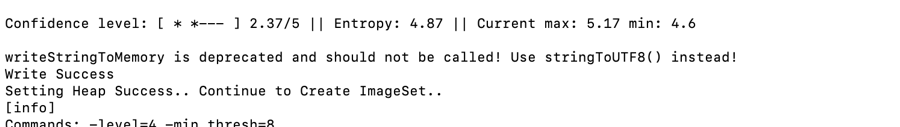

Second attempt - a jar of veg and chicken noodles

In this experiment we test recognising an NFT marker on a curved surface in varying lighting conditions.

We cropped out the label from the jar, hoping that would provide sufficient complexity without the potential conflict of background detail (e.g. the lid of the jar).

However, the image processing only gave us a confidence level of 2.37/5 - not great. So we processed the full jar image as well, and the confidence level improved to 3.07/5 - a gain of 0.70 points, which is 14% of the five-point scale, or roughly 30% relative to the label-only score.
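The two ways of expressing the improvement are easy to confuse, so here is the arithmetic as a minimal sketch. The variable names are ours for illustration; the only inputs are the two confidence levels reported above and the maximum score of 5.

```python
SCALE_MAX = 5.0    # confidence levels are reported out of 5
label_only = 2.37  # cropped label
full_jar = 3.07    # full jar image

absolute_gain = full_jar - label_only
# Gain expressed as a share of the whole five-point scale.
share_of_scale = absolute_gain / SCALE_MAX * 100
# Gain expressed relative to the label-only starting score.
relative_gain = absolute_gain / label_only * 100

print(f"absolute gain: {absolute_gain:.2f} points")
print(f"share of scale: {share_of_scale:.0f}%")
print(f"relative gain: {relative_gain:.1f}%")
```

The 14% figure is therefore a share of the scale, while the improvement measured against the original score is closer to 30%.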

In both demos, we placed an orange cube and a grey tablet in front of the jar, superimposed over the label, in order to gauge the correct positioning and orientation of the AR objects.

As you can see from the video, we had some success. However, during development, the objects were very difficult to orient consistently - this was eventually achieved through trial and error.

In the live demo, the application had difficulty recognising the label as a marker, and once it did, the position of the superimposed objects was unstable. Both issues appear to be exacerbated by inconsistent lighting.

Using more complex assets: a model with realistic textures, and video embedding

Tomato complex model

In this experiment we place a model of a tomato with realistic textures on product packaging.

- Product packaging AR demo 4: tomato - using the Hiro marker

- Product packaging AR branded: animated tomato - using the vegetable cannelloni packaging

- Product packaging AR branded: animated tomato - using the Veg & Chicken Noodles jar

As can be seen from the videos above, the least consistent experience is the jar-based marker. We found that the jar is very sensitive to small, almost imperceptible changes in lighting conditions.

Embedded video shown on packaging

In this demo we sought to embed a video within a 'hole in the wall' superimposed on the vegetable cannelloni packaging.

First, we checked that our video would embed in an AR scene, using the previous Hole in the Wall experiment as the basis:

- Hole in the Wall with embedded Hipp video - using Hiro marker

This demonstrates that the Hipp video works, with the caveat that it will require a play button - because autoplay videos are not permitted on the iOS browser.

Next, we superimposed the video on the vegetable cannelloni packaging in an AR scene.

- Product packaging with embedded Hipp video - using the vegetable cannelloni packaging as an NFT marker

The demo showed the same issues as before - jitter due to camera shake, lighting conditions and an older device struggling to keep up with the processor load. However, these issues were less apparent than in previous experiments.

Further experimentation is required to consistently position the superimposed video.

Next steps

Once both assets can be satisfactorily positioned relative to the packaging, we will combine them into one AR scene.

However, this may not pan out - a single NFT marker already takes a lot of processing power, leading to battery drain, and in the worst case the combined scene may fail to show either asset.

In addition, it is worth considering that products are not as a rule displayed individually, and this could cause unexpected behaviour with NFT markers. For example, with multiple Hiro markers, the asset is only shown once - 'hopping' between the markers as each one is acquired in turn.

Note, as a bonus, that the hopping video above demonstrates nicely that different sized markers give correspondingly scaled AR objects.

Experiments continue: in part 2 we experiment further with superimposing stable videos and animated objects on an NFT marker.